A central commonality between culture and subjectivity is that each owes much of its definition to the existence of mere differences in preference or taste, however arbitrary or meaningful these may turn out to be.

Tag Archives: subjectivity

A little back-and-forth on psychology (mostly)…

[Source: http://intjforum.com/showthread.php?p=5418403#post5418403]

SS=me

T=correspondent

T: I feel like psychology is too weak on its own…

SS: Ironically, actually, it is psychology’s over-reliance on the other, more established sciences (viz., biology) that has done it a disservice in the past century. Its prevailing attempts to imitate traditional empiricism and its methods precisely have left it theoretically underdeveloped and disunited, so that there are a number of sub-disciplines making up ‘psychology’, without any coherent framework making their connections–and, thus, the status of psychology as a grounded and self-consistent science–clear, to either its insiders or those looking from without.

T: I don’t see over-reliance being an issue. Fields separate because of expertise and imaginary divisors of what is connected to what (it’s all connected). The brain is small, so it’s easier to understand by partitioning.

SS: It’s fine that studies of the brain be partitioned, and additionally for disciplines to look toward others in figuring out how to best proceed themselves. But over-reliance, for psychology’s case, has made it such that it has no clear idea of what mind’s ontological status is, which is highly relevant to the extent that it should want to precisely characterize and explore the phenomenon (or, perhaps more accurately: its sub-phenomena) on its own terms.

Mind is not–in any obvious, or hitherto successfully argued-for way–‘physical’ in the same sense that every other physical object that has been studied scientifically has been shown to be. On the other hand, if its operations and contents actually are directly (i.e., not relying solely on measures of brain activity) detectable via physical methods, then it must be admitted that we currently lack the technology with which to achieve such. (Whereas for formerly-discovered phenomena of at least a somewhat similar nature, w/r/t their raising for us measurability problems–e.g., the invisible parts of the electromagnetic spectrum–further technological advancement was needed in order to actually establish their physical reality.)

Gravity, of course, would be another example of a postulated force with causal potency in relation to the known-about physical realm, but that is still undetectable in a way that has prevented us from knowing exactly what kind of physical substance it is or is made up of. (Assuming that it is, indeed, ‘physical’ in the traditional sense of possessing measurable energy or discrete constituents of matter.)

T: I’m hoping computational cognition becomes a field in the future, once we have cracked the puzzle, which will be accomplished in the next few years.

SS: Cognitive science is already computational.

T: That’s true, but it’s not authentic, nor is there even a basic mind that’s capable of meaningful use by means of artificial self-awareness.

SS: I’m not really sure what you mean by cognitive science’s treatment of mind not being “authentic”, though it certainly is incomplete at present. Perhaps that we haven’t (yet) established sentience/self-awareness in artificial/computational models of mind? If this is what you are getting at, the intellectual or empirical proof of any entity being sentient/self-aware is a highly complex problem, particularly the more we look at species (or, perhaps and for some, things like computers) that are not human.

T: Deep learning is about as sophisticated as it gets, and it’s not really even that impressive. The brain is highly parallel and we’ve only just begun to get the hardware that is matching it. Graphics cards follow this structure.

SS: Yes, and neural nets. Though those have been around for quite a while, and are extremely simplistic when compared to the actual human brain.

Again, though, the mind’s computational aspects have been overemphasized (since at least the cognitive revolution of the ’70s–though the story may well have started earlier, solidifying most definitively during psychology’s behaviorist phase) at the expense of a rigorous and fully-general understanding of what mind actually is. For the most part, cognitive psychologists don’t study and have not studied philosophy, which has also meant that they are largely unaware of 1) just how relevant the mind-body problem is to their work, and 2) how multi-faceted and complex mind truly is.

T: We are going to have to agree to disagree. I see nothing special about the mind that differs from any model of computation. Are you not in favor of the Turing Machine model that’s theorized to compute anything that a human brain can?

SS: Well, independent of my own views on mind, that a Turing machine can compute “anything” a human brain can is so far a promissory note, at most. (How do we come up with an exhaustive list of all of the computations the human mind is capable of performing? At what point or how could we know whether such would be complete?) As is the general assumption that mental phenomena are, or will be found to be, governed by the same natural laws that physical ones are.

T: As far as what is computable by the human brain, it is mostly assumed at this time.

[…]

T: Philosophy or philosophical approaches is/are useful in any discipline. Consciousness is a problem for metaphysicians. The “study of behavior” is not deep enough, but it’s a good starting point.

SS: Consciousness has become a problem for quite a few more groups than metaphysicians, hence its currently being a highly interdisciplinary field (consisting of researchers from A.I.; phenomenology; cognitive neuroscience; philosophy; physics; computer science…). As metaphysics has for so long proven incapable of solving the hard problem of consciousness (or even characterizing it in a way that would make it more amenable for other disciplines), it makes more sense now than ever that the problem should be treated in such a multi-plural manner.

T: All of those prior to the precomputational era are exempt. Metaphysicians up from the 1900s are the only ones that count. To date, none of them are particularly impressive. As philosophy has been phased out somewhat, it’s slowly coming back, for good reason.

[…]

SS: No cracking the puzzle of mind without understanding just what it is and how it works, which may hinge on the extent to which progress is made on the question of how subjective experience (which has to be central to any complete science of mind) and the body are related: in addition to how the former is even possible, and what its nature (history; universal scope; dynamics…) is. We are not close to an answer to this ‘hard problem’, at present, and so the hope that it will be answered successfully “in the next few years” seems preposterous to the extreme.

T: I refuse to believe it’s as difficult as people make it out to be. Humans are emotionally driven, it’s not that hard. Emotionally Oriented Programming as a humorous analogy. After that, it’s simply an amalgam of networks that are extremely, extremely complex.

SS: You can refuse it all you like, but the fact is that it really has been so difficult for the vast majority of people who have studied and are studying mind.

Emotion is a part of the puzzle (and one that has been pretty neglected by psychology, and especially by cognitive science), but it is not by any means the only or necessarily biggest part of it.

T: Emotions are an evolutionary advantage, a synthetic approach would be a network that precedes and influences the logical network and determines how well it functions based on the load (whether too high or low) in its various sub-networks put on this artificial emotion network.

SS: I haven’t studied the question of interactive emotional-logical computational systems much myself, but here is something related in case you are unaware of it: https://en.wikipedia.org/wiki/Affective_computing
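[Editorial sketch: one very rough way to picture the arrangement T describes above, in which an "emotion" stage sits in front of a "logical" stage and scales how well it functions according to load. Everything here is an assumption made for illustration only: the names `EmotionGate`, `efficiency`, and `logical_step`, the idea that each sub-network's load is a number between 0 and 1, the 0.2/0.8 thresholds, and the linear fall-off. None of it comes from the exchange itself or from the affective computing literature.]

```python
from dataclasses import dataclass


@dataclass
class EmotionGate:
    """Hypothetical 'emotion network' reduced to a single gating rule."""
    low: float = 0.2   # assumed threshold below which load counts as "too low"
    high: float = 0.8  # assumed threshold above which load counts as "too high"

    def efficiency(self, loads):
        """Return a factor in [0, 1] describing how well the logical stage runs.

        The factor is 1.0 while the mean sub-network load stays inside
        [low, high] and falls off linearly toward 0.0 outside that band.
        """
        if not loads:
            raise ValueError("at least one sub-network load is required")
        # Clamp each load to [0, 1] so the fall-off stays well defined.
        clamped = [max(0.0, min(1.0, load)) for load in loads]
        mean_load = sum(clamped) / len(clamped)
        if mean_load < self.low:
            return mean_load / self.low
        if mean_load > self.high:
            return (1.0 - mean_load) / (1.0 - self.high)
        return 1.0


def logical_step(inputs, gate, loads):
    """Toy 'logical network': pass inputs through, scaled by the gate's factor."""
    factor = gate.efficiency(loads)
    return [value * factor for value in inputs]


if __name__ == "__main__":
    gate = EmotionGate()
    print(logical_step([2.0, 4.0], gate, loads=[0.5, 0.5]))  # load inside the band
    print(logical_step([2.0, 4.0], gate, loads=[0.9, 0.9]))  # load above the band
```

[The only point of the sketch is the ordering: the gate is consulted before the logical step, and both too little and too much load degrade the result. Whether anything like this captures emotion is exactly what is in dispute in the exchange.]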

[…]

T: Subjective and objective are really not any different. It’s an elaborate illusion.

I can’t believe no one’s figured that out yet.

SS: Believe it.

The subjective/objective question is still more in the domain of philosophy, and less within consciousness studies and cognitive science (though it is significantly more present in the former of these two than the latter).

Re. the subject-object split being an “illusion”, Eastern traditions (e.g., Buddhism) have held this view for centuries, and Western thought has recently begun to catch on to it, as evidence continues to mount in disfavor of Descartes’ original material body-immaterial soul(/mind) separation.

It is still an open question, however, whether or not subjective and objective may still be meaningfully distinguished in certain ways (or for certain reasons).

T: Dualism is just [stupid]. I won’t dismiss it though when dealing with whatever a “soul” can be, but for everything else, it’s unnecessary. Not including it won’t even compromise computation.

You can give the credit to any previous schools of thought, but the fact is, they’re not necessary for solving this puzzle. Plenty of people have said wise things, yet those wise things on their own are meaningless until given proper application.

[…]

T: Subjectivity is not much more than an internalized world shaped by language processing of that mind/body and feeling of mind/body. Due to diversity of parameters, each of us differs.

SS: If subjectivity really were “not any different” from objectivity, as you asserted above, then it would be much more than a mere “internalized world”. In consciousness studies, the problem of subjectivity is the ‘hard problem of consciousness’:

“The really hard problem of consciousness is the problem of experience. When we think and perceive, there is a whir of information-processing, but there is also a subjective aspect. As Nagel (1974) has put it, there is something it is like to be a conscious organism. This subjective aspect is experience. [emphasis added]” (Chalmers, 1995: Facing Up to the Problem of Consciousness)

[…]

T: Of course, all of this is meaningless without a proper model for a basic mind, which is achieved after stripping the senses. Adding them back on simply adds potential for freedom and further learning.

SS: How could the senses possibly be 100%-stripped in a way that would leave what would presumably be left of the mind intact? It seems dubious that the (self-aware) mind as we are familiar with it could exist at all in this purely ‘senseless’ kind of manner.

T: A mind would be intact, but if the mind in this example would be mirrored from birth, it’s not a worthwhile exercise. A baby with no senses wouldn’t lead to much at all. Not much would be learned.

It’s simply a process akin to reverse engineering, by trial-and-error. It’s kind of cheating, since adults have already accumulated lots of data (through experience/time).

The major issue is mostly “what is reality?”, not “what is subjectiveness?”. “What is mind?” is what naturally follows thereafter. Tying the t[w]o together, “what is complexity?”. “What is emerging complexity?” Although they are related, it’s clear to me at least, that subjectivity isn’t the right starting point, but rather a red herring.

[…]

SS: Personally, I think cognitive neuroscience (which has largely come to subsume psychology’s studies of mind) and cognitive-behaviorism (psychology’s current ‘paradigm’, if one may roughly speak of one) will have a lot to learn in particular from quantum physics; philosophy; and consciousness studies (e.g., phenomenology), if psychology and cognitive science are to have any hope of meaningfully contributing toward solving the mind-body problem. Computational theories have helped and conceivably will continue to do so, but their limits–which one need only look at artificial intelligence’s history from the past century to appreciate–will have to be acknowledged, before mind can be defined as accurately, and characterized as faithfully as it will ultimately need to be for our genuine understanding.

T: Quantum computers offer specialized processing for different areas of computation. Whether that relates to the mind or not remains to be determined. I suspect that the processes it would help are nonetheless not important for our starting goal, but essential for reaching higher peaks of intelligence.

SS: Intelligence is a problem I won’t go into, here; but, it will be interesting to see what potential new possibilities quantum computing will open up for cognitive science, as the former becomes more developed and as the two fields are synthesized.

T: Intelligence is one of the most key factors in solving this issue, but what the word encapsulates is clearly its own problem.

[To be continued…?]

Textual analysis (or hermeneutic work) as “all subjective”

This belief is rather easily explained away (though, sadly: not so easily disposed of, for the complacent offenders and “their” n=30 “subjects”!). It stems merely from a lack of correct understanding of differing methodologies, and their correspondences with prima facie differing practices.

Take my words, here. You are reading them. But are you reading me–my intent, my desires; and so on? If not, you are committing what I take to be the essential fallacy of the most literalizing scientists and analytic philosophers, who all fail to appreciate the proper way to arrive at another person’s meaning. For, if one does not understand what something means to the speaker–or, indeed, to any of their possibly-billions of listeners–one will forever be trapped and mired in his, her, or hir own “subjective” (in this case, impoverished as-such) meaning, distinct from and un-legitimized by one’s fellow beings in the world. Indeed: what a “meaning”!

For such a person, inter-subjectivity forever remains a mystery; coherent sociality at all willfully mystifies them, and what is left to mystify one will ultimately block one from becoming the best they can possibly be–whether “for themselves, or others”. (These quotes are necessary: for they hint at the absolute absurdity of the classical I-,-rather-than-thou formulation!)

In short, the one who instinctively dismisses hermeneutic work as “all subjective–and therefore useless” operates with a distinct lack of empathy: of caring for the immeasurable relativity of meaning among their “fellow” beings; of enriching subjectivity, generally; of truly understanding and connecting–and, henceforth, of caring for “him-, “her-, or “hir-self”.

Cling not to the dreaded “to the man!” “fallacy” quite so dearly, my friend–dialogical achievement is necessarily both art and science! Admit to a broader set of fallacies than have been so thoughtlessly inculcated: and tuck away that dirtied monologizing monocle, if only for the mere moment, good madams and sirs–

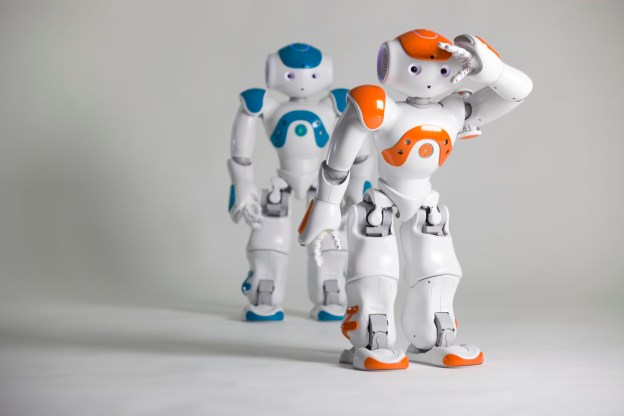

“Are we approaching robotic consciousness?” (video response)

[Original post: http://intjforum.com/showpost.php?p=5159026&postcount=2]

My thoughts (I’m ‘S’)—

Video: King’s Wise Men/NAO demonstration

S: Impressive. I’d be interested to see what future directions the involved researchers take things.

Prof. Bringsjord: “By passing many tests of this kind—however narrow—robots will build up and collect a repertoire of abilities that start to become useful when put together.”

S: Didn’t touch on how said abilities could be “added up” into a potential singular (human-like) robot. Though Bringsjord doesn’t explicitly seem to be committing to such, the video’s narrator himself claims to see it as “much like a child learning individual lessons about its actual existence”, and then “putting what it learns all together”; which is the same sort of reductionist optimism that drove and characterized artificial intelligence’s first few decades of work (before the field realized its understandings of mind and humanness were sorely lacking). So the narrator (verbally) endorses the view that adding up robotic abilities is possible within a single unit, which I have yet to see proof or sufficient reason to be confident of.

(Being light, for a moment: Bringsjord could well have a capitalistic, division-of-labor sort of robotic-societal scenario ready-at-mind in espousing statements like this…)

Narrator: “The robot talked about in this video is not the first robot to seem to display a sense of self.”

S: ‘Self’ is a much trickier and more abstract notion to handle, especially in this context. No one in the video defines it, or tries to say whether or how it’s related to sentience or consciousness (defined in the two ways the narrator points to), and few philosophers and psychologists have done a good job with it as of yet, either. See Stan Klein’s work for the best modern treatment of self that I’ve yet come across.

Video: Guy with the synthetic brain

S: Huh…alright–neat, provided that’s actually real. Sort of creepy (uncanny valley, anyone?), but at least he can talk Descartes…not that I know why anyone would usefully care to do so, mind, at this specific point of time in cognitive science’s trajectory.

Dr. Hart: “The idea requires that there is something beyond the physical mechanisms of thought that experiences the sunrise, which robots would lack.”

S: Well, yeah: the “physical mechanisms of thought” don’t equal the whole, sum-total experiencer. Also, I’m not sure what he means by something being “beyond” the physical mechanisms of thought…sort of hits my ears as naive dualism, though that might only be me tripping on semantics.

Prof. Hart (?): “The ability of any entity to have subjective perceptual experiences…is distinct from other aspects of the mind, such as consciousness, creativity, intelligence, or self-awareness.”

S: Not much a fan of treating creativity and intelligence as “aspects of the mind”…same goes for consciousness, for hopefully more-obvious reasons. Maurice Merleau-Ponty is the one to look into with respect to “subjective perceptual experiences”, specifically his Phenomenology of Perception.

Narrator: “No artificial object has sentience.”

S: Well, naturally it’s hard to say w/r/t their status of having/not having “subjective perceptual experience”, but feelings are currently being worked on in the subfield of affective computing. (There’s still much work re. emotion to be done in psychology and the harder sciences before said subfield can *really* be considered in the context of robotic sentience, though.)

Narrator: “Sentience is the only aspect of consciousness that cannot be explained…many [scientists] go as far as to say it will never be explained by science.”

S: They may think so, and perhaps for good philosophical reasons; but that won’t stop, and indeed isn’t stopping some researchers from trying.

Narrator: “Before this [NAO robot speaking in the King’s Wise Men], nobody knew if robots could ever be aware of themselves; and this experiment proves that they can be.”

S: “Aware of themselves” again leads to the problem briefly alluded to above, regarding the philosophical and scientific impoverishment of the notion of ‘self’. I know what the narrator is attempting to get at, but I still believe this point deserves pushing.

Narrator: “The question should be, ‘Are we nearing robotic phenomenological consciousness?’”

S: Yep! And indeed, you have people like Hubert Dreyfus arguing for “Heideggerian AI” as a remedy for AI’s current inability to exhibit “everyday coping”, i.e. operating with general intelligence and situational adaptability in the world (or “being-in-the-world”, a la Heidegger).

In cognitive science terms, this basically boils down to the main idea underlying embodied cognition, a big move away from the old Cartesian or “representational” view of mind.

Narrator: “When you take away the social constructs, categories, and classes that we all define ourselves and each other by, and just purely looking at what we are as humans and how incredibly complex we are as beings, and how remarkably well we function in a way, actually really amazing, and kind of beautiful, too…so smile, because being human means that you’re an incredible piece of work.”

S: I wish the narrator would have foregone the cheesy-but-necessary-for-his-documenting-purposes part about humans’ beauty and complexity, in favor of going a bit further into the obviously difficult and tricky territory of “social constructs, categories, and classes” that we “all define ourselves and each other by”: and how near or far robots can be said to be from having truly human-like socio-cultural sensibilities and competencies.